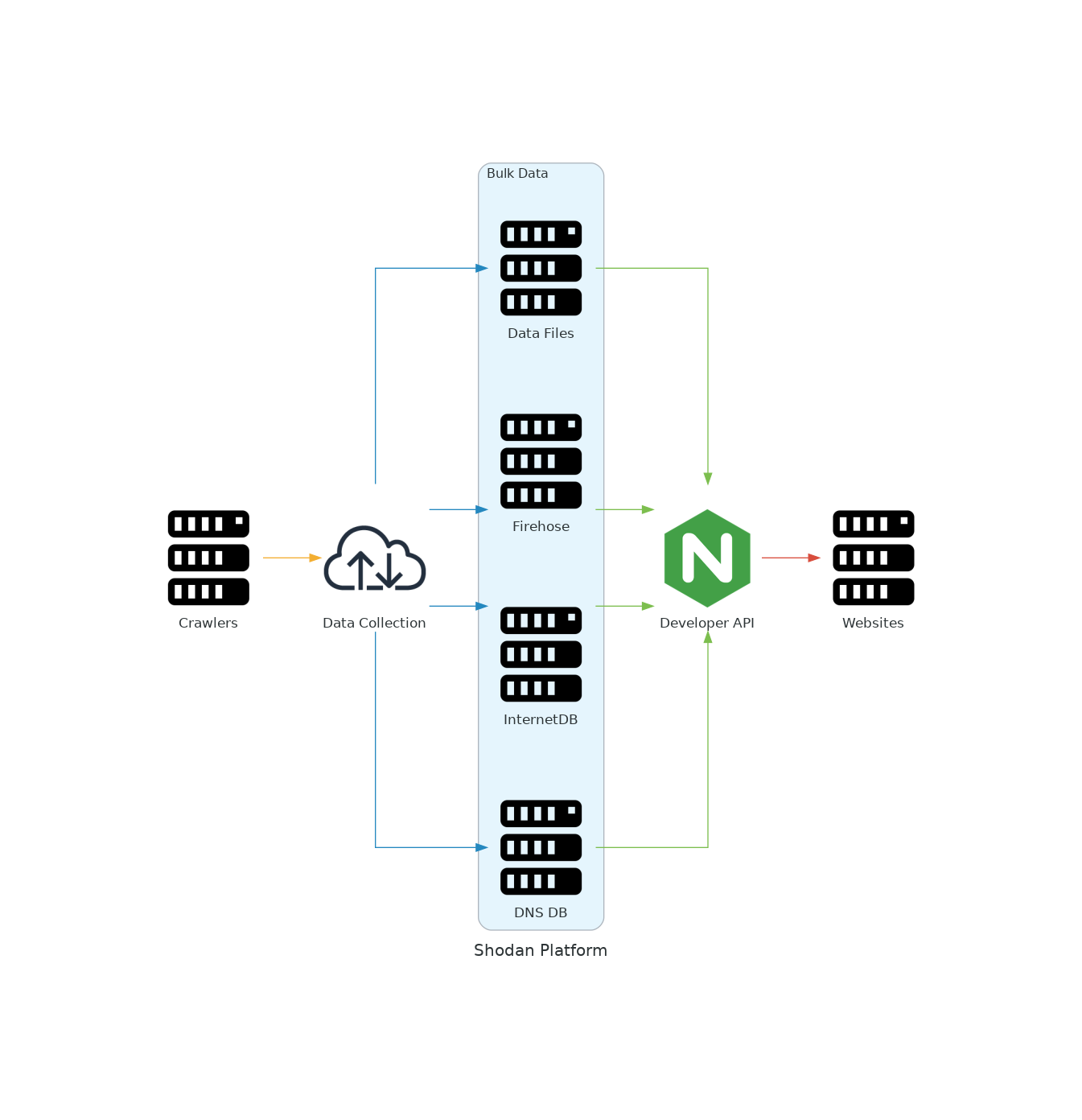

All Shodan websites are built entirely on-top of the same public Shodan API that everybody else uses. This means that anything you can do via the website you can also do programmatically using the API. Below is a high-level diagram showing the flow of data in the Shodan platform:

Shodan Monitor works by creating a private, filtered firehose on your behalf, subscribing to it, and then sending out notifications based on the triggers that you've defined. Everything you see in Shodan Monitor is based on the data from that private firehose. If you'd like to consume the data feed yourself, there are two options. The first is the Shodan command-line interface (CLI):

    $ shodan stream --alert=all

The optional --alert parameter accepts either an alert ID or the special keyword all, which tells the firehose to return data for all monitored networks. Streaming the data to the terminal isn't very useful on its own, though. Let's store all the banners in a local directory:

    $ mkdir shodan-monitor-data
    $ shodan stream --alert=all --datadir=shodan-monitor-data/

Now we're subscribing to the private firehose and all banners will be stored in the shodan-monitor-data/ directory. The CLI will automatically rotate the files in the directory so the data will be broken into daily files.
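Once a few daily files have accumulated, you will probably want to read them back. The following is a minimal sketch of one way to do that using only the standard library. It assumes the CLI writes gzip-compressed files ending in .json.gz with one JSON-encoded banner per line; check the contents of your directory before relying on that. The function name iter_saved_banners is made up for this example, and ip_str and port are standard banner properties.

```python
import gzip
import json
from pathlib import Path


def iter_saved_banners(datadir):
    """Yield banners (as dicts) from every *.json.gz file in datadir."""
    # Sorting the file names keeps the daily files in chronological order,
    # assuming they are named by date.
    for path in sorted(Path(datadir).glob("*.json.gz")):
        # Assumption: each file holds one JSON-encoded banner per line.
        with gzip.open(path, "rt", encoding="utf-8") as handle:
            for line in handle:
                line = line.strip()
                if line:
                    yield json.loads(line)


if __name__ == "__main__":
    for banner in iter_saved_banners("shodan-monitor-data"):
        print(banner.get("ip_str"), banner.get("port"))
```

Because the function is a generator, it reads one banner at a time and does not load a whole day's data into memory.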

The other way to grab data is by connecting to the stream.shodan.io API (aka firehose). If you want to write your own code to connect to the firehose and not use an existing Shodan library then please check out the Network Alerts methods on the following page:

https://developer.shodan.io/api/stream

We would recommend using the official Python library for Shodan which makes it easy to connect to the firehose:

    from shodan import Shodan

    # Enter your Shodan API key
    API_KEY = ''

    api = Shodan(API_KEY)

    # Subscribe to the private firehose for all monitored networks
    for banner in api.stream.alert():
        print(banner)  # Do something interesting here

In the above code, we connect to the firehose and ask it to return data for all monitored networks. We could also subscribe to a specific network alert by providing an optional aid parameter:

    for banner in api.stream.alert(aid='DF8SFl3B'):
        print(banner)  # Do something interesting here
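As a rough sketch of what "something interesting" could look like, the example below turns each banner into a one-line summary before printing it. The helper names describe_banner and watch_alert are hypothetical and exist only for this illustration. ip_str, port and hostnames are standard banner properties, but the code treats all of them as optional in case a banner lacks one.

```python
def describe_banner(banner):
    """Return a short 'ip:port (hostnames)' summary for one banner dict."""
    ip = banner.get("ip_str", "unknown")
    port = banner.get("port", "?")
    # hostnames may be missing or empty, so fall back to an empty list.
    hostnames = ", ".join(banner.get("hostnames") or [])
    summary = "{}:{}".format(ip, port)
    return "{} ({})".format(summary, hostnames) if hostnames else summary


def watch_alert(api_key, alert_id=None):
    """Print a summary of every banner for one alert, or all alerts if None."""
    # Imported here so describe_banner() can be reused without the library.
    from shodan import Shodan

    api = Shodan(api_key)
    for banner in api.stream.alert(aid=alert_id):
        print(describe_banner(banner))
```

Calling watch_alert(API_KEY) would follow every monitored network, while watch_alert(API_KEY, 'DF8SFl3B') would follow only the alert with that ID.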

Consuming the data from the firehose is straightforward once you understand the architecture and how it fits into the overall picture. Here is a script that can be used as a Scripted Input in Splunk with an interval of 0 (i.e. it will run continuously). The Python script below subscribes to your private firehose and pulls any discovered banners for your network(s) into Splunk. It requires no dependencies beyond the requests library:

    import requests

    # Enter your Shodan API key
    API_KEY = ''


    def main():
        # Open a long-lived streaming connection to the private firehose
        response = requests.get(
            'https://stream.shodan.io/shodan/alert',
            params={'key': API_KEY},
            stream=True,
        )
        response.raise_for_status()

        for line in response.iter_lines():
            # Skip any empty lines; each remaining line is one JSON-encoded banner
            if line:
                print(line.decode('utf-8'))


    if __name__ == '__main__':
        main()

All banners are published to your private firehose, not just the ones that match a trigger's criteria.
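Since the firehose delivers everything, any narrowing has to happen on your side. The sketch below shows one possible approach: parse the raw lines into dictionaries and keep only the banners on ports you care about. This is plain client-side filtering and does not reproduce Shodan's trigger logic. The function names parse_stream_lines and only_ports are hypothetical, and the handling of blank lines assumes the stream may contain empty lines between banners.

```python
import json


def parse_stream_lines(lines):
    """Yield banner dicts from raw stream lines, skipping blank lines."""
    for line in lines:
        if not line:
            continue  # Assumption: empty lines carry no banner data.
        if isinstance(line, bytes):
            line = line.decode("utf-8")
        yield json.loads(line)


def only_ports(banners, ports):
    """Yield only the banners whose 'port' value is in ports."""
    wanted = set(ports)
    for banner in banners:
        if banner.get("port") in wanted:
            yield banner
```

With a streaming requests response, this could be used as only_ports(parse_stream_lines(response.iter_lines()), {22, 3389}) to see only SSH and RDP banners.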

Shodan has partnered with Gravwell to provide a way to store all Shodan Monitor events within your own data lake. Simply run the following command to install the Shodan ingester in your Gravwell cluster:

    apt install gravwell-shodan

The installation process will ask for your API key and afterwards you will start storing all Shodan Monitor events. Gravwell manages the connection to the Shodan API and efficiently stores the captured data.